Link analysis tools become interactive knowledge-level query interfaces…

Dropping the other shoe

Introducing nested entangled parsers and Carmot-E in particular…

Heteromorphic Languages

A new programming paradigm that blows away today’s programming languages…

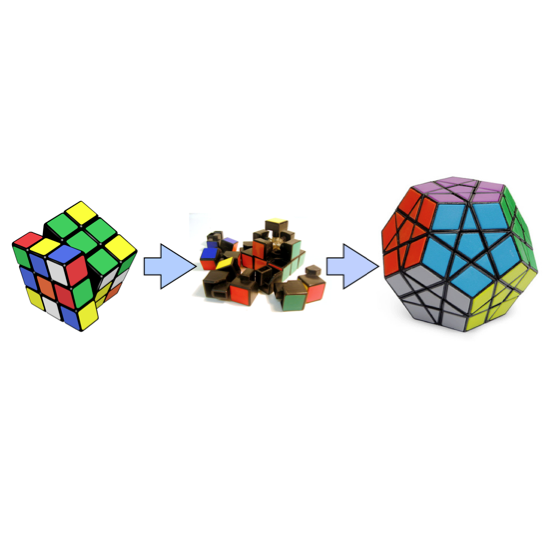

Data Integration – a new way

MitoMine™ is Mitopia’s ontology-based, next-generation data integration and mining suite…

Data Integration – the old way

Today’s DI tends to be weak, destructive, and non-adaptive…

The GUI interpreter layer

The benefits to be gained by passing GUI behaviors through an interpreted GUI language outweigh any possible timing overhead…

An adaptive GUI abstraction

Sadly, GUI frameworks arise from an interest in UI…

Software Evolution

Why is it that large program life expectancy is just a few short years…

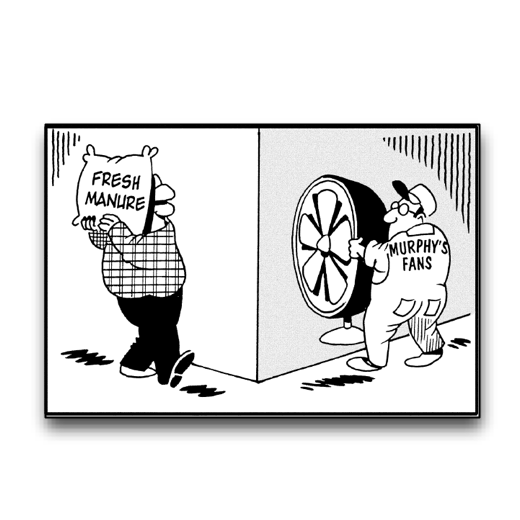

When stuff hits the fan…

Robust error handling in an adaptive system requires a holistic approach…

MitoQuest™ Queries

In the previous post we introduced the MitoQuest™ plug-in which is the principal member of the MitoPlex™ federated server architecture. In this post we will look in more detail at …

The MitoQuest™ plug-in

As described previously, MitoPlex™ is the federation layer of Mitopia’s persistent storage (i.e., distributed database) model, and MitoQuest™ is the principal and most pervasive of the federated containers. MitoQuest™ is directly …

MitoPlex™ Queries

In the previous post, we introduced the overall architecture of Mitopia’s MitoPlex™ server federation. In this post I want to look at the architecture of MitoPlex™ queries in a little …

The MitoPlex™ Federation

MitoPlex™ is a federated, ontology-driven replacement for today’s databases…

Representing field types

In the previous post we examined how Mitopia’s GUI auto-generation capability handles the representation of structures and sub-structures and the fields within. In an earlier post we looked at how …

Just the fields please Ma’am

In the previous post we looked at how Mitopia® auto-generates the GUI appropriate for examining and navigating a collection of nodes. In this post we will begin to look at …